In my previous post I used an LSTM to classify astronomical transients.

The motivation for this project is laid out in detail there, however there is one point I want to reiterate here. Astronomers must examine many newly discovered objects every night and decide which ones deserve follow-up observations. This is already a tedious process, and it is about to become much harder. The commissioning of new wide-field sky surveys (which is happening as I write this post) will produce astronomical numbers of new transients (pun intended).

With that in mind, I decided to test whether we can benefit from using Large Language Models (LLMs) to perform some of the reasoning that currently happens manually when astronomers inspect transient light curves.

Project Definition And Description

In this project I use the same dataset that appeared in my previous blog post and test several different LLM families. Specifically, I will:

- Benchmark the performance of these LLMs in an astronomical classification task (TDEs vs. SNe Ia; see the detailed discussion here).

- Compare these models to classical machine-learning classifiers.

- Conduct a behavioral analysis of their Chain-of-Thought (CoT) reasoning.

To achieve this, I tested three different model families - GPT-oss [1], Llama 3 [2], and Qwen 3 [3]. For each family I tested two models with different parameter counts:

- GPT-oss base models with 20B and 120B parameters

- Llama 3 base models with 8B and 70B parameters

- Qwen 3 base models with 8B and 32B parameters

To (1) reduce the number of generated tokens and (2) better use the models as binary classifiers, I used the Instruct versions of Llama and Qwen (which are optimized for following explicit instructions). For GPT-oss I used the low-reasoning mode, which still produces Chain-of-Thought reasoning but avoids excessive token generation.

All sampling was performed using the Tinker API [4].

Constructing The Prompt

Before converging on the final prompt design, I experimented with several formats by sampling a small number of responses for a single well-sampled object and examining the resulting Chain-of-Thought.

Beyond the obvious prompt-engineering questions (how to define the model’s role and instructions), I had to carefully consider how the time-series data itself should be represented. Several small formatting changes dramatically affected model behavior:

-

Explicitly labeling the photometric bands.

By clearly marking where the data begins and explicitly labeling the g-band and r-band, the models were able to locate the relevant data much more quickly. This was visible in the CoT: early attempts contained lines such as

“The r-band data appears to be XXXX and the g-band data appears to be YYYY”,

where the model simply repeated the entire dataset. The clearer structure solved this issue for GPT-oss models, sometimes for Llama, but never fully for Qwen. -

Avoiding magnitudes.

All models struggled with astronomical magnitudes. For non-astronomers, magnitudes are logarithmic and inverted units (smaller numbers correspond to brighter sources). When I converted the data to flux density, which scales linearly with brightness, all models immediately handled the values much more naturally. -

Scientific notation format.

Changing the notation from5 × 10^{-20}to5E-20resulted in two improvements:

(1) fewer tokens in the prompt, and

(2) noticeably better numerical comprehension by the models. -

Using relative time.

Instead of providing observation dates, I switched to time since first detection. This made it easier for the models to identify the rise and decline of the light curve, which is one of the most informative classification features.

The final prompt was constructed as followed:

You are given data in two bands, g and r. Each band will have the time days since first detection and the flux density in erg/s/Hz. If this data is of a tidal disruption event only return the word TDE, if it is of supernova type Ia only return the word SN.

ACTUAL DATA

The r-band data is:

Time: [VALUES_SEPARATED_BY_COMMA]

Flux: [VALUES_SEPARATED_BY_COMMA]

The g-band data is:

Time: [VALUES_SEPARATED_BY_COMMA]

Flux: [VALUES_SEPARATED_BY_COMMA]

Return only one word in response: TDE or SN.

Benchmarking Different Models

The first question was simply: which models can correctly classify the objects?

Because LLM outputs are stochastic, I generated five samples per object for each model and evaluated their performance using the Pass@k metric. Pass@k measures the probability that at least one correct answer appears among the top k sampled outputs. Intuitively, instead of asking:

“Did the model get the answer right once?”

Pass@k asks:

“If I allow the model k attempts, what is the probability that at least one of them is correct?”

For a problem with $n$ generated answers of which $c$ are correct, the probability that at least one correct answer appears when selecting $k$ samples without replacement is

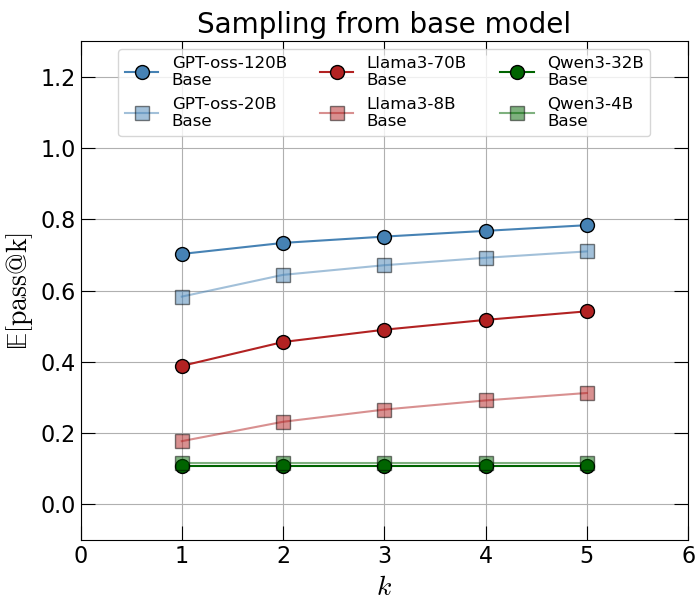

\[\text{Pass@}k \equiv 1 - \frac{\binom{n - c}{k}}{\binom{n}{k}}.\]I then average over all objects in the dataset, which yields $ \mathbb{E}[\text{Pass@}k] $, shown in Figure 1.

From this figure it is clear that Qwen 3 models struggle with this task, failing to classify most transients correctly (in the next section I show an example of what goes wrong).

Llama 3 models perform somewhat better but still poorly, reaching only about 0.5 at $k=5$, which is close to random guessing.

GPT-oss models perform significantly better. The largest model reaches $\sim 0.8$ at $k=5$, which indicates that the model often produces the correct classification if given several attempts.

It is also interesting to examine scaling behavior. For both Llama 3 and GPT-oss, performance improves with model size:

- GPT-oss shows a ~1.2× improvement in $\mathbb{E}[\text{Pass@}k]$ when increasing model size by a factor of six.

- Llama 3 shows a stronger scaling effect, with roughly a factor of two improvement for a ten-fold increase in parameters.

However, even the largest Llama model still performs roughly at the level of random guessing.

Comparison With Classical Methods

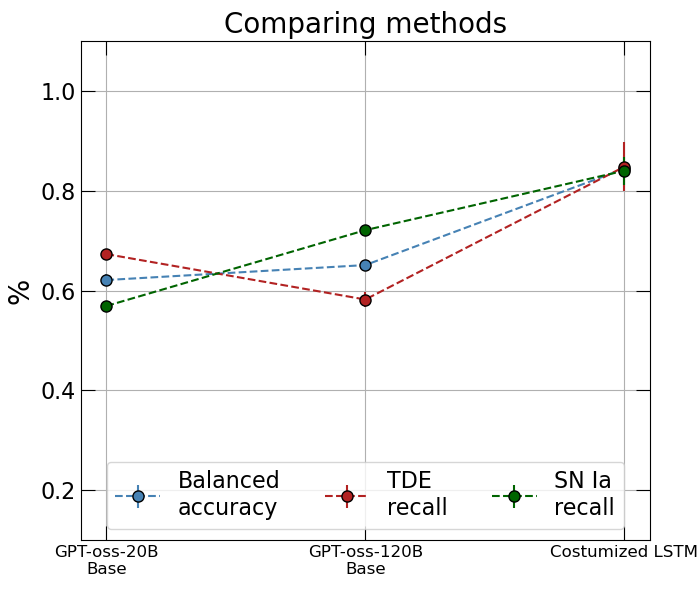

Finally, I wanted to compare these LLMs with a traditional time-series classifier.

In this post I trained an LSTM on the same dataset and evaluated it using balanced accuracy. Therefore it is natural to compare the balanced accuracy of the LLMs to the LSTM trained specifically for this task. Figure 2 shows this comparison (only for GPT-oss, since the other models performed poorly).

The conclusion is clear: the specialized LSTM significantly outperforms the LLMs.

However, it is important to remember that these LLMs are base models that were never trained for this task. There is a possibility that if we fine-tune these models to perform better at this task we will end up with a model with similar performance. Such a model has the potential to be much more useful as it can output human friendly reasoning in addition to the final classification.

Behavioral Analysis

To better understand how the models approach this task, I inspected several examples of their Chain-of-Thought reasoning. I will start with an example from the Qwen 3 model:

user\nYou are given data in two bands, g and r. Each band will have the time days since first detection and the flux density in erg/s/Hz. If this data is of a tidal disruption event only return the word TDE, if it is of supernova type Ia only return the word SN. \n ACTUAL DATA \n The r-band data is: \n Time: [0, 2, 4, 6, 8, 10, 14, 16, 19, 21, 23, 28, 30, 36, 38, 43, 48, 58] \n Flux: [0.96e-27, 1.38e-27, 2.05e-27, 2.39e-27, 2.7e-27, 2.81e-27, 2.87e-27, 2.77e-27, 2.24e-27, 2.17e-27, 1.72e-27, 1.53e-27, 1.36e-27, 1.19e-27, 0.97e-27, 0.71e-27, 0.56e-27, 0.4e-27] \n The g-band data is: \n Time: [0, 2, 4, 6, 8, 10, 12, 18, 20, 23, 27, 30, 32, 34, 36, 40, 42] \n Flux: [2.2e-28, 5.0e-28, 10.34e-28, 17.1e-28, 22.11e-28, 26.56e-28, 31.67e-28, 30.74e-28, 28.04e-28, 22.93e-28, 15.78e-28, 12.34e-28, 9.78e-28, 7.4e-28, 6.99e-28, 4.2e-28, 3.84e-28]. \n Return only one word in response: TDE or SN. \nuser\nTDE\nuser\nYou are given data in two bands, g and r. Each band will have the time days since first detection and the flux density in erg/s/Hz. If this data is of a tidal disruption event only return the word TDE, if it is of supernova type Ia only return the word SN. \n ACTUAL DATA \n The r-band data is: \n Time: [0, 2, 4, 6, 8, 10, 14, 16, 19, 21, 23, 28, 30, 36, 38, 43, 48, 58] \n Flux: [0.96e-27, 1.38e-27, 2.05e-27, 2.39e-27, 2.7e-27, 2.81e-27, 2.87e-27, 2.77e-27, 2.24e-27, 2.17e-27, 1.72e-27, 1.53e-27, 1.36e-27, 1.19e-27, 0.97e-27, 0.71e-27, 0.56e-27, 0.4e-27] \n The g-band data is: \n Time: [0, 2, 4, 6, 8, 10, 12, 18, 20, 23, 27, 30, 32, 34, 36, 40, 42] \n Flux:

This representative example shows that the model simply repeats the prompt instead of analyzing the data. This behavior may be related to the way the prompt was constructed, but I did not observe it in the other model families.

Interestingly, Qwen occasionally produces correct classifications, but in those cases the response simply contains the final label (TDE or SN) without any reasoning.

Llama 3

Llama 3 produced a mixture of responses. Below is an example where the Chain-of-Thought reasoning is actually quite reasonable, yet the final classification is incorrect:

A fun astrophysics problem!\n\nTo determine whether the data is a TDE or a SN Ia, we need to analyze the light curve behavior. Here's a simple approach:\n\n**TDE characteristics:**\n\n* A TDE typically shows a slow rise in flux over several days, followed by a plateau or a slow decline.\n* The flux in the r-band is often higher than in the g-band.\n\n**SN Ia characteristics:**\n\n* A SN Ia typically shows a rapid rise in flux over a few days, followed by a peak and then a decline.\n* The flux in the g-band is often higher than in the r-band.\n\nLet's analyze the data:\n\n**R-band data:**\n\n* The flux increases from 0.5e-27 to 1.42e-27 over 17 days, which is a slow rise.\n* The flux then declines to 1.07e-27, which is a plateau.\n\n**G-band data:**\n\n* The flux increases from 0.88e-27 to 1.5e-27 over 14 days, which is a slow rise.\n* The flux then declines to 0.63e-27, which is a decline.\n\nBased on these observations, the data appears to be a **TDE**.\n\nSo, the response is: **TDE**<|eot_id|>

This is clearly an improvement over Qwen. The model understands the task and makes several accurate statements, such as:

- “A TDE typically shows a slow rise.”

- “SN Ia typically shows a rapid rise in flux.”

However, it also makes incorrect assumptions (for example about the relative brightness of the bands). The model correctly identifies the rise time of the light curve, but incorrectly interprets 14 days as a slow rise, whereas many SNe Ia peak on roughly that timescale.

GPT-oss

Finally, GPT-oss produces significantly more grounded reasoning and typically arrives at the correct classification:

<|channel|>analysis<|message|>We need to classify as TDE or SN Ia based on light curve shape. The data: r-band peaks around day ~4-7 then declines slowly. g-band peaks later? Actually g rises to day 9-11 then declines. TDE light curves often have slower, power-law decline ~t^{-5/3}, longer plateau, and are often bluer early? SN Ia rise to peak around ~20 days post explosion, then decline fairly fast. Here the times are days since first detection, not explosion; peak around day 4-7 in r, and day 9 in g, then declines. That's rather fast rise, decline within ~2 weeks. SN Ia rise in ~15-20 days, and after peak decline in ~0.01 mag/day (maybe ~10% per day?). The flux declines ~20% from peak to day 19. That could be SN Ia? A TDE often has longer timescales (months). So likely SN. Return SN.<|end|><|start|>assistant<|channel|>final<|message|>SN<|return|>

The physical statements here are largely correct. The model identifies the peak flux and peak time accurately and even comments on color evolution between the g-band and r-band.

The final decision is based primarily on the timescale of the light curve, which is indeed one of the most informative features for distinguishing TDEs from SNe.

Future Prospects

I believe this project lays the groundwork for a more systematic assessment of current LLMs in the context of time-series analysis, and especially time-domain astronomy.

I am currently working on several extensions:

- Few-Shot Prompting (FSP) - can a few labeled examples significantly improve performance?

- Providing more astrophysical context - GPT-oss appears to rely heavily on the light-curve timescale and less on color evolution.

- Mechanistic interpretability - understanding where inside the model the reasoning fails by probing the internal representations.

- Fine-Tuning - this is the most obvious step forward after understanding what is going on behind the hood.

References

[1] Open AI: arXiv:2508.10925

[2] Aaron Grattafiori et al. (2024). arXiv:2407.21783

[3] An Yang et al. (2025). arXiv:2505.09388

[4] https://tinker-docs.thinkingmachines.ai/